The newest preview of Geekbench for ML workloads is now here, delivering several improvements in our testing methodology for even more accurate measurement of real-world performance, as well as support for three entirely new platforms: Geekbench ML is now available on PC, Mac, and Linux. As companies continue to deliver newer, faster, and better AI systems and features, our updated frameworks and models make it possible to compare ML performance across devices and platforms with Geekbench’s well-known usability.

This new preview is available on iOS at the Apple App Store, on Android through the Google Play Store, and newly available for macOS, Windows, and Linux through our downloads page.

New Platforms

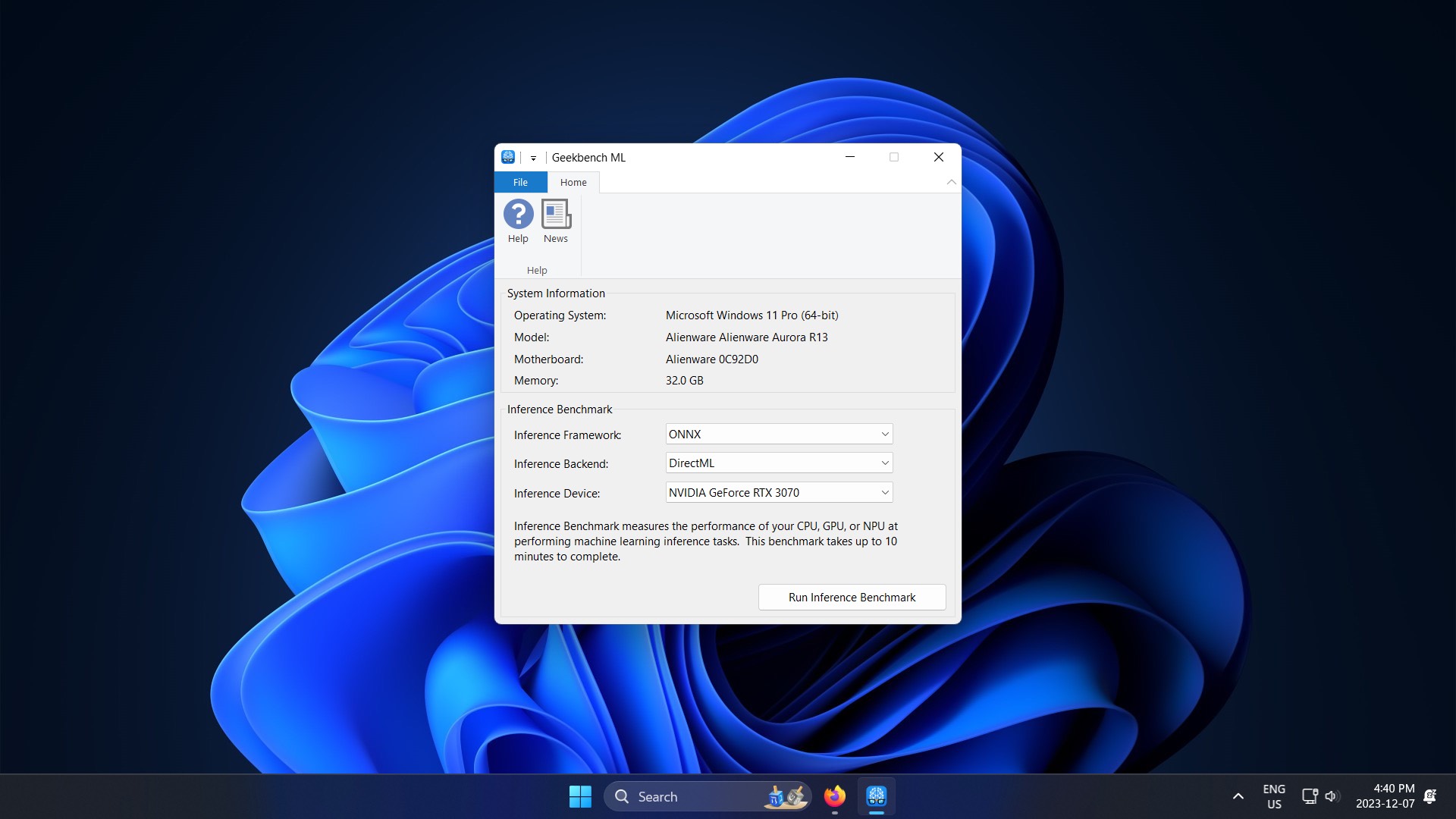

AI and ML-related workflows aren’t just confined to mobile, and hardware architecture on desktop and laptop devices is changing to accommodate this shift in computing. With this latest 0.6 preview, Geekbench ML now supports Windows, macOS, and Linux. This means you’ll be able to see how machine learning-powered tasks run on your desktop, laptop, or even a server — whether it has new AI-specific hardware or not. And, as always, our models and data sets are identical across all supported platforms, making scores comparable.

New Frameworks

Developers don’t typically work directly on bare hardware in assembly; abstraction layers and frameworks simplify the process. Geekbench ML 0.6 upgrades our internal version of TensorFlow Lite, supporting newer models and improved performance on Android hardware with NPUs using NNAPI. On Windows, we use ONNX with DirectML, supporting both CPU and GPU. And on iOS and macOS, Geekbench ML 0.6 uses Core ML directly, executing models with the CPU, GPU, or Neural Engine.

Taken together, this means that Geekbench ML 0.6 uses newer, better frameworks that support more up-to-date models and faster performance on newer hardware. On iOS, for example, the switch to Core ML better reflects modern app use cases, as developers targeting the platform are less likely to use TensorFlow Lite. These changes better illuminate real-world AI and machine learning performance on your device.

New Workloads

To more accurately reflect the kinds of tasks performed by your apps and software, Geekbench ML 0.6 includes three entirely new workloads.

The new Depth Estimation workload reflects the advances in AI-powered photography behind software-assisted portrait mode effects. Our model generates a depth map: an image in which each pixel holds an estimate of depth for the corresponding location in the original image. Camera software uses this per-pixel depth data to programmatically add enhancements such as subject isolation and background blur, which makes the workload representative of real-world ML applications.
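To make the idea concrete, here is a minimal sketch of how per-pixel depth estimates might drive a portrait-style effect. It is not the Geekbench ML workload or any camera vendor's pipeline. The function names, the threshold value, and the convention that smaller values mean "closer to the camera" are all assumptions made for illustration.

```python
def foreground_mask(depth_map, threshold):
    """Return a mask that is True where a pixel is closer than `threshold`.

    `depth_map` is a list of rows of depth estimates. This sketch assumes
    smaller values mean closer to the camera (an illustrative convention).
    """
    return [[depth < threshold for depth in row] for row in depth_map]


def count_background(mask):
    """Count pixels marked as background, i.e. candidates for blurring."""
    return sum(1 for row in mask for is_foreground in row if not is_foreground)


# A tiny hypothetical 3x4 depth map: a near subject in the middle columns,
# with a distant background on either side.
depth = [
    [9.0, 1.2, 1.1, 8.5],
    [9.1, 1.0, 1.0, 8.7],
    [9.3, 1.3, 1.2, 8.9],
]
mask = foreground_mask(depth, threshold=2.0)
background_pixels = count_background(mask)
```

A real effect would then blur only the background pixels, typically with a soft transition rather than a hard threshold.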

Our new Style Transfer workload reflects generative AI use cases in popular apps, like those that generate a photo of you as if a specific artist painted or drew it. Our model takes an original content image and a style reference image and blends them together, producing a modified version of the original image in the artistic aesthetic of the style reference image.

AI-powered super-resolution image upscaling is used in many ways — for example, it can be part of other ML object classification workloads, and it can enhance performance and visual fidelity in the games that we play on our devices. Geekbench ML 0.6 delivers a new Image Super-Resolution workload to reflect these kinds of increasingly common use cases. This workload enhances and upscales an image by a 4x factor, increasing image resolution and dynamically drawing out and inferring details that are otherwise obscured in the original.
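As a rough illustration of what a 4x factor implies, the sketch below computes output dimensions and performs a naive nearest-neighbor upscale. It assumes the factor applies to each side of the image, which is the usual convention but is not stated explicitly here. The function names are hypothetical, and the pixel-repetition baseline is only a point of contrast, not the model used in the workload.

```python
def upscaled_size(width, height, factor=4):
    """Output dimensions when each side is multiplied by `factor`."""
    return width * factor, height * factor


def nearest_neighbor_upscale(image, factor=4):
    """Naive baseline: repeat each pixel `factor` times in both directions.

    This adds pixels but no new detail. A learned super-resolution model
    instead predicts plausible detail for the added pixels.
    """
    out = []
    for row in image:
        wide_row = [pixel for pixel in row for _ in range(factor)]
        # Copy the widened row so each output row is an independent list.
        out.extend(list(wide_row) for _ in range(factor))
    return out


small = [[1, 2], [3, 4]]
large = nearest_neighbor_upscale(small)
new_width, new_height = upscaled_size(256, 256)
```

Under this assumption, a 4x upscale produces 16 times as many pixels as the input, which is why the quality of the inferred detail matters so much.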

Improved Workloads

We have upgraded all of our quantized models from Post-Training Integer Quantization to Quantization Aware Training. The newer models deliver improved performance and accuracy, reflecting new industry standards for ML applications.
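Both techniques named above produce models that store values as low-precision integers. They differ in when the rounding error is accounted for: after training, or simulated during training. The sketch below shows the affine 8-bit mapping that integer quantization generally relies on. It is a simplified illustration, not the conversion pipeline used to build the Geekbench ML models, and the function names and sample weights are hypothetical.

```python
def quantization_params(values, qmin=-128, qmax=127):
    """Derive a scale and zero point that cover the observed value range."""
    lo = min(min(values), 0.0)  # include 0.0 so it maps to an exact integer
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin)
    if scale == 0.0:            # all values are zero; avoid dividing by zero
        scale = 1.0
    zero_point = int(round(qmin - lo / scale))
    return scale, zero_point


def quantize(x, scale, zero_point, qmin=-128, qmax=127):
    """Map a float to an 8-bit integer, clamping to the representable range."""
    q = int(round(x / scale)) + zero_point
    return max(qmin, min(qmax, q))


def dequantize(q, scale, zero_point):
    """Recover an approximate float from its quantized integer."""
    return (q - zero_point) * scale


# Hypothetical weights, used only to exercise the functions.
weights = [-1.0, -0.25, 0.0, 0.5, 1.0]
scale, zero_point = quantization_params(weights)
quantized = [quantize(w, scale, zero_point) for w in weights]
restored = [dequantize(q, scale, zero_point) for q in quantized]
```

The restored values differ slightly from the originals. That rounding error is what quantization-aware training lets a model adapt to, and what post-training approaches try to limit by calibrating on representative data.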

Additional Information

Longer and more complete descriptions of these and other workloads and models included in this preview release are available in the Geekbench ML 0.6 Inference Workloads document. All of our tests are based on well-understood and widely used machine learning and AI industry standards for accurate testing methodology.

Geekbench Browser

Geekbench ML is integrated with the Geekbench Browser, so you can compare scores from this latest preview with other devices, see top performers on the Geekbench ML Benchmark Chart, or check out the most recent results on the Latest Geekbench ML Inference Results page.

Still a Preview

These new platforms, workloads, and models join a suite of other cross-platform tests already included in Geekbench ML 0.6, including Image Classification, Object Detection, Machine Translation, Face Detection, and Text Classification. However, this is still a preview release. Geekbench ML 0.6 scores cannot be compared with scores from the prior Geekbench ML 0.5 release, and we expect to make further adjustments to our testing based on community feedback. Join our Discord to share your input.

Together, the tests in Geekbench ML 0.6 accurately represent current AI and ML use cases across mobile and desktop. Geekbench ML provides a much-needed point of reference as these kinds of features become increasingly relevant to the new ways that we use our devices.

The ML and AI industry has grown and changed drastically in the four years since work began on Geekbench ML. The rapid pace of research and improvement makes performance comparison even more important, and also more challenging. We’re taking our time to get things right, and Geekbench ML 0.6 is close to what we would like to deliver in our upcoming 1.0 release, which we plan to have available in 2024.

In the meantime, we invite you to download Geekbench ML 0.6 for iOS, Android, and Desktop.